Update-Friendly Bitmap Indexing

To address this problem we study the design principles of bitmap indexing, taking into account all prior approaches, and we identify and quantify three bottlenecks when updating: (i) decoding and re-encoding when updating in place, (ii) update pressure on a single auxiliary bitvector when out-of-place invalidation is enabled, and (iii) repeated bitwise operations with the auxiliary bitvector to re-evaluate the value positions of the bitmap index. We introduce three new design principles to mitigate these bottlenecks.

UpBit buffers updates in multiple auxiliary bitvectors, which distributes the update pressure instead of directing all updates to a single bitvector.
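A minimal sketch of this idea, not UpBit's actual implementation: it assumes one auxiliary "update bitvector" per indexed value, and it assumes that the current state of a value is the XOR of its value bitvector and its update bitvector. The class name `TinyUpdatableBitmap` and its methods are hypothetical, and Python integers stand in for uncompressed bitvectors.

```python
class TinyUpdatableBitmap:
    """Toy bitmap index with one value bitvector (VB) and one
    update bitvector (UB) per distinct value. Hypothetical sketch."""

    def __init__(self, column, domain):
        self.n = len(column)
        self.vb = {v: 0 for v in domain}  # value bitvectors (ints used as bitsets)
        self.ub = {v: 0 for v in domain}  # update bitvectors, initially all zeros
        for row, v in enumerate(column):
            self.vb[v] |= 1 << row

    def get_bit(self, value, row):
        # Assumption: current bit = VB bit XOR UB bit.
        return ((self.vb[value] ^ self.ub[value]) >> row) & 1

    def update(self, row, new_value):
        # Find the value currently stored at `row`.
        old_value = next(v for v in self.vb if self.get_bit(v, row))
        if old_value == new_value:
            return
        # Only the two affected update bitvectors are touched;
        # the value bitvectors stay as they are.
        self.ub[old_value] ^= 1 << row
        self.ub[new_value] ^= 1 << row

    def search(self, value):
        bits = self.vb[value] ^ self.ub[value]
        return [r for r in range(self.n) if (bits >> r) & 1]


idx = TinyUpdatableBitmap([10, 20, 10, 30], domain=[10, 20, 30])
idx.update(0, 30)      # row 0 changes from 10 to 30
print(idx.search(10))  # rows that currently hold 10
print(idx.search(30))  # rows that currently hold 30
```

In this sketch an update flips one bit in each of two small auxiliary bitvectors, so updates to different values land on different bitvectors rather than on one shared structure.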

UpBit uses localized decoding: when merging the buffered updates with a value bitvector, it decodes only the affected part rather than the full bitvector.
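One way to picture localized decoding is with position markers ("fence pointers") into a compressed bitvector. The sketch below is an illustration under assumptions: it uses plain run-length encoding as a stand-in for whatever encoding the index uses, and the helpers `rle_encode`, `build_fences`, and `get_bit` are hypothetical names.

```python
def rle_encode(bits):
    """Run-length encode a list of 0/1 values as [bit, run_length] pairs."""
    runs = []
    for b in bits:
        if runs and runs[-1][0] == b:
            runs[-1][1] += 1
        else:
            runs.append([b, 1])
    return runs


def build_fences(runs, granularity):
    """For each position that is a multiple of `granularity`, record
    (index of the run covering it, first position of that run)."""
    fences = []
    start = 0     # first position covered by the current run
    next_pos = 0  # next position that needs a fence
    for i, (_, length) in enumerate(runs):
        while next_pos < start + length:
            fences.append((i, start))
            next_pos += granularity
        start += length
    return fences


def get_bit(runs, fences, granularity, pos):
    """Read one bit by jumping to the nearest fence and scanning forward,
    instead of decoding the bitvector from its beginning."""
    i, start = fences[pos // granularity]
    while start + runs[i][1] <= pos:
        start += runs[i][1]
        i += 1
    return runs[i][0]


bits = [0] * 10 + [1] * 3 + [0] * 7
runs = rle_encode(bits)
fences = build_fences(runs, granularity=4)
print(get_bit(runs, fences, 4, 11))  # bit at position 11
```

The fence granularity is a tuning knob in this sketch: denser fences shorten the scan per lookup at the cost of more metadata.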

Finally, UpBit follows the workload to adaptively merge the updates with value bitvectors, avoiding repetitive bitwise operations and keeping the buffer size small.
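A rough sketch of workload-driven merging, under stated assumptions: the merge is triggered when a read finds more than `threshold` buffered updates, and buffered updates are applied by XOR. Both the trigger policy and the names (`MergingBitvector`, `flip`, `read`) are hypothetical and only illustrate the principle.

```python
def popcount(x):
    """Number of set bits in a non-negative integer."""
    return bin(x).count("1")


class MergingBitvector:
    """One value bitvector plus its buffer of pending updates."""

    def __init__(self, value_bits, threshold):
        self.vb = value_bits        # value bitvector (int used as bitset)
        self.ub = 0                 # buffered updates
        self.threshold = threshold  # assumed merge trigger
        self.merges = 0

    def flip(self, row):
        # Buffer an update without touching the value bitvector.
        self.ub ^= 1 << row

    def read(self):
        current = self.vb ^ self.ub
        # The read already computed the combined result, so if the buffer
        # has grown past the threshold, keep that result and reset the buffer.
        if popcount(self.ub) > self.threshold:
            self.vb, self.ub = current, 0
            self.merges += 1
        return current


bv = MergingBitvector(0b0011, threshold=2)
bv.flip(2)
first = bv.read()   # one buffered update: no merge yet
bv.flip(3)
bv.flip(0)
second = bv.read()  # three buffered updates: merge happens here
```

Because the merge piggybacks on a read that has to combine the two bitvectors anyway, later reads of the same value skip the extra bitwise work until new updates accumulate.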

UpBit in action

Putting together the three design elements, we build UpBit, a scalable in-memory updatable bitmap index that offers very fast updates (several orders of magnitude faster than prior approaches) with competitive read performance.

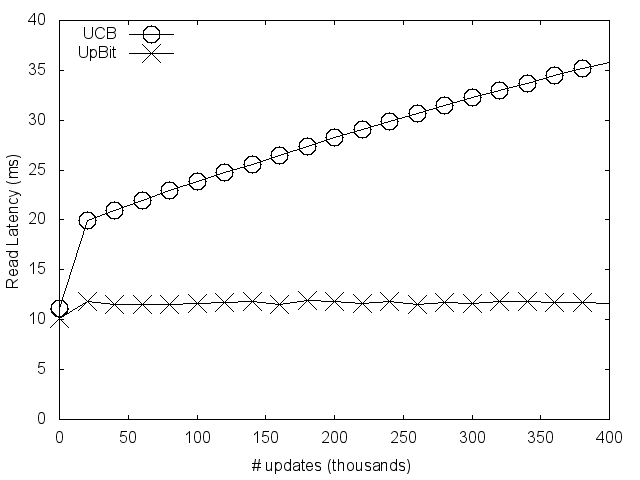

In a workload with a mix of reads and writes, UpBit, contrary to the state of the art, sees no additive cost and delivers stable performance.

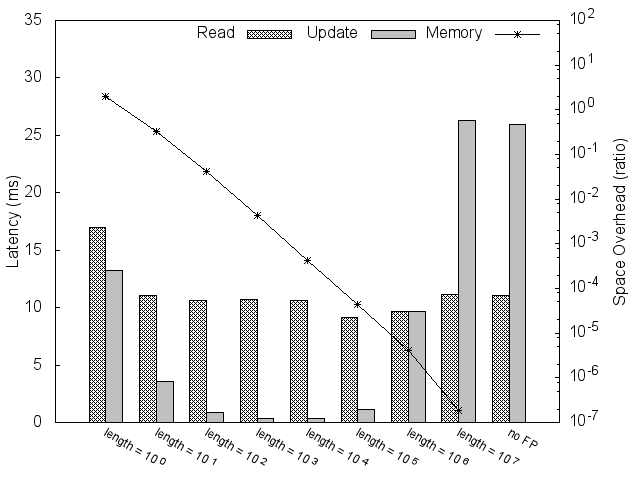

UpBit can navigate a trade-off space where read performance can be exchanged for update performance, while clever use of metadata can better balance reads and writes.
